Making-of: Olga Bell – ATA

I made the music video for “ATA”, a track from Olga Bell’s LP.

We discussed many hidden concepts and contexts behind the video, but they don’t need to be explained here. I’d like to write down only a few technical notes, for myself or perhaps for someone who wants to know about such niche techniques.

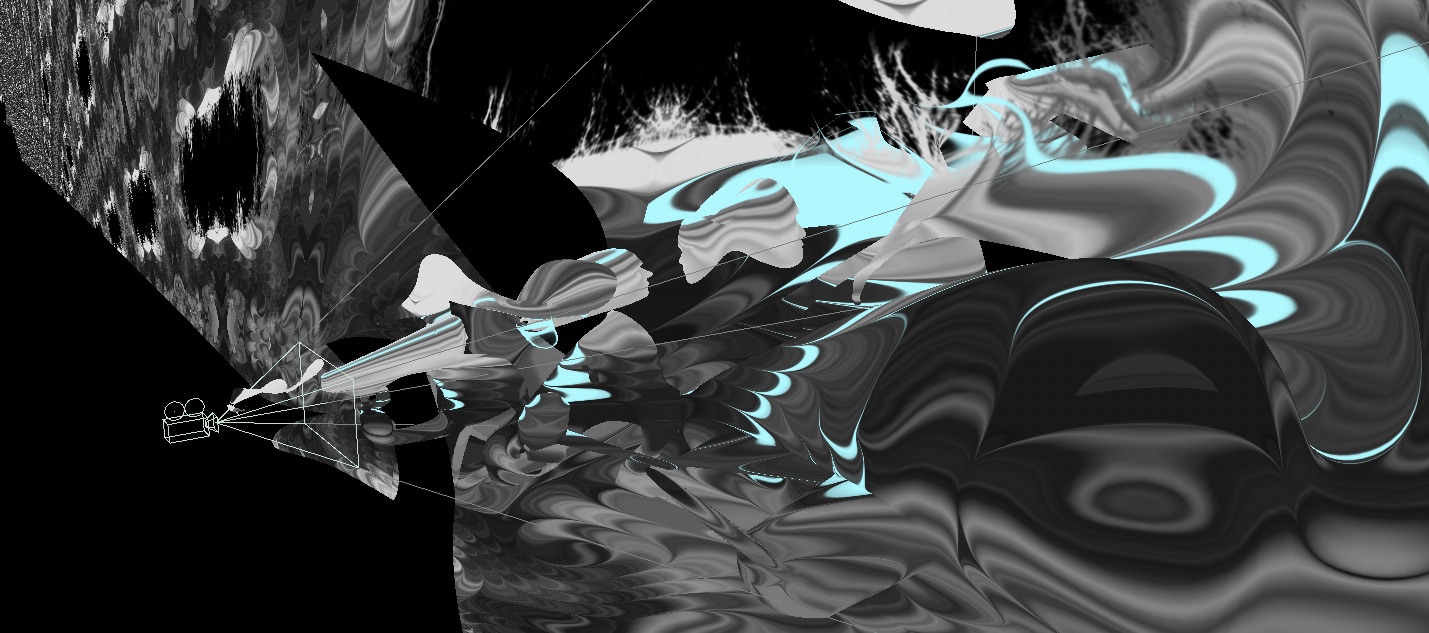

3D Matte Painting

Although the video may look as if it was 3D-scanned, like this or this, I actually modeled all the objects by hand using a basic technique called “3D matte painting”. I simply projected the photos taken by Josh Wool onto the whole scene and modeled each object so that its perspective bends strangely, defying the usual rules of perspective. I used Cinema4D and the X-Particles plugin for modeling, and Photoshop for decomposing the photos into layers. (I just applied “content-aware fill” to the back side of each layer lol) FYI, I was inspired by reverse perspective paintings, Felice Varini’s works, and topological shapes like the Calabi-Yau manifold.

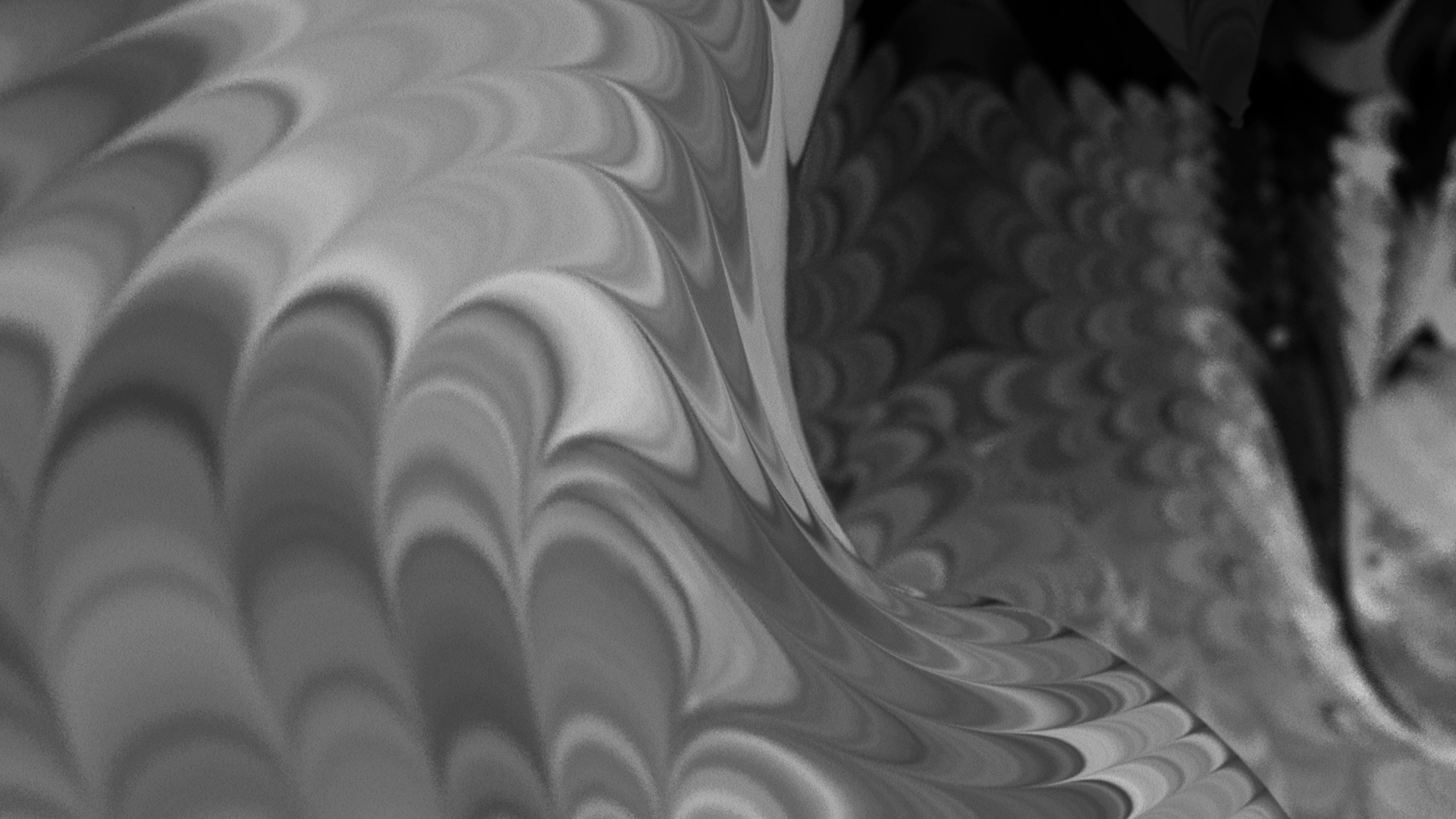

Melting the Texture using oF

I’ve been experimenting with generating abstract gradients by bending photos I take every day, a method I personally call “Feedback Displacement”. It repeatedly warps an input image a little at a time, which eventually produces a pattern like hand-marbled silk. I performed with it as a VJ a few times this year, and I had wanted to bring the technique into ordinary video production.
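To make the idea concrete, here is a minimal sketch in plain Python. It is not the actual oF code: it assumes a tiny grayscale image stored as nested lists, uses each pixel’s own brightness as the direction to pull from, and the function names (`displace_once`, `feedback_displacement`, `make_test_image`) are made up for illustration. The `speed`, `angle`, and `opacity` parameters are just my guess at how the parameters mentioned later could be used.

```python
import math

def displace_once(img, speed=1.0, angle=0.0, opacity=1.0):
    """Warp `img` slightly, using its own brightness as the flow direction."""
    h, w = len(img), len(img[0])
    out = [[0.0] * w for _ in range(h)]
    for y in range(h):
        for x in range(w):
            # Brightness (0..1) decides which direction this pixel samples from.
            theta = angle + img[y][x] * 2.0 * math.pi
            sx = int(round(x + math.cos(theta) * speed)) % w  # wrap at edges
            sy = int(round(y + math.sin(theta) * speed)) % h
            warped = img[sy][sx]
            # Blend the warped sample over the original pixel.
            out[y][x] = (1.0 - opacity) * img[y][x] + opacity * warped
    return out

def feedback_displacement(img, steps, **params):
    """Feed each result back in as the next input (the 'feedback' part)."""
    for _ in range(steps):
        img = displace_once(img, **params)
    return img

def make_test_image(w=16, h=16):
    """A simple diagonal gradient with values in 0..1."""
    return [[(x + y) / (w + h - 2) for x in range(w)] for y in range(h)]
```

Calling `feedback_displacement(make_test_image(), steps=30, speed=1.5)` smears the gradient a bit more on every pass. A real version would run on the GPU with smooth sampling, but the loop structure is the same.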

I built a simple system that integrates C4D with openFrameworks so that my ofApp works as a sort of external renderer for C4D. The app exports an image sequence with its frames synced to C4D, and the sequence can then be set as an animated texture in C4D. The flow is as follows:

- C4D sends a frame number and the current parameters (warping speed, angle, and opacity) via OSC.

- oF receives the message, then applies a displacement effect to the current frame.

- oF saves the frame as an image if needed.

- oF sends back a “just done rendering” message with the frame number via OSC.

- When it receives that message, C4D advances to the next frame and returns to the first step.
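The handshake above can be sketched roughly like this. It is only an illustration, not the uploaded source: the OSC addresses `/render` and `/done` are hypothetical, the encoder handles only int32 and float32 arguments (following the OSC 1.0 rule that strings are null-terminated and padded to 4 bytes), and the “renderer” is a plain function call standing in for the UDP round trip between C4D and oF.

```python
import struct

def _pad(b):
    """Null-terminate and pad to a multiple of 4 bytes (OSC 1.0 string rule)."""
    b += b"\x00"
    return b + b"\x00" * (-len(b) % 4)

def osc_encode(address, *args):
    """Encode a message with int32 ('i') and float32 ('f') arguments only."""
    tags, payload = ",", b""
    for a in args:
        if isinstance(a, int):
            tags += "i"
            payload += struct.pack(">i", a)
        else:
            tags += "f"
            payload += struct.pack(">f", a)
    return _pad(address.encode()) + _pad(tags.encode()) + payload

def _read_str(data, pos):
    end = data.index(b"\x00", pos)
    # Skip the terminator and padding: next 4-byte boundary after the text.
    return data[pos:end].decode(), pos + ((end - pos) // 4 + 1) * 4

def osc_decode(data):
    address, pos = _read_str(data, 0)
    tags, pos = _read_str(data, pos)
    args = []
    for t in tags[1:]:
        args.append(struct.unpack_from(">i" if t == "i" else ">f", data, pos)[0])
        pos += 4
    return address, args

def renderer_handle(packet, saved):
    """Stand-in for the oF side: read parameters, 'render', reply when done."""
    address, (frame, speed, angle, opacity) = osc_decode(packet)
    saved.append(frame)  # the real app would warp and save an image here
    return osc_encode("/done", frame)

def run_timeline(first, last):
    """Stand-in for the C4D side: advance only after the renderer confirms."""
    saved, frame = [], first
    while frame <= last:
        request = osc_encode("/render", frame, 0.5, 0.0, 1.0)
        reply = renderer_handle(request, saved)  # in practice: a UDP round trip
        address, (done_frame,) = osc_decode(reply)
        if address == "/done" and done_frame == frame:
            frame += 1
    return saved
```

The important part is the lockstep: the timeline never moves forward until the renderer reports the same frame number back, so the two apps cannot drift apart.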

The advantage is that you get the best of both a timeline-based method and a generative approach. C4D and other general video-production software are not good at “difference equations”, where a small change is repeatedly added to the previous result. This is because such apps need to support multi-threaded rendering to handle a tremendous amount of calculation, so no frame is allowed to depend on another frame’s state unless the result is baked for every frame. With a creative coding approach, however, it is very easy.
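A toy example of the difference, with made-up functions: `keyframed` depends only on the frame number, so frames can be rendered in any order or in parallel, while `simulate` has to walk through every earlier frame because each state is built from the previous one.

```python
def keyframed(frame):
    """Timeline-style value: a pure function of the frame number."""
    return frame / 10.0

def step(prev, speed=0.1):
    """One increment of a difference equation: x[n] = x[n-1] + speed * (1 - x[n-1])."""
    return prev + speed * (1.0 - prev)

def simulate(n_frames, x0=0.0):
    """Feedback-style value: frame n is only reachable through frames 0..n-1."""
    states = [x0]
    for _ in range(n_frames):
        states.append(step(states[-1]))
    return states
```

Rendering frame 100 of `keyframed` is a single call, but getting frame 100 of `simulate` means running all 100 steps first (or baking them once and caching the result).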

Conversely, code-oriented tools are not good at timeline-based work like making a music video. Although some digital artists use VEZÉR or Ableton Live as a sequencer, I decided to drive everything from C4D directly, since I think it’s the simplest way. Incidentally, this idea originally comes from Satoru Higa’s experiment.

I uploaded the source and project file, though they’re quite messy.

I’ve always been as curious about “making new ways to make” as about the artistic or visual side. That phrase is quoted from Masashi Kawamura, describing how he develops concepts (it originally comes from Masahiko Sato). For me, as a designer and coder, it means “designing new workflows and tools to make”. I don’t even know exactly what I mean by that myself lol, but I want to keep experimenting.